Role:

Lead UX & Research Strategist, Concept Designer.

Timeline:

12 Weeks

Tools:

Arduino, C++ (ArduinoFFT), Secondary Research, Fusion360.

Collaborator:

Ben Ascough (Technical Development & 3D Modeling).

At a Glance

The Problem

Existing assistive alert devices for the hearing-impaired community are often cost-prohibitive, heavily reliant on visual attention, or locked into proprietary ecosystems (like Apple or Bellman & Symfon).

The Solution

Loom is a conceptual, Arduino-based wearable designed for genuine independence. It acts as a standalone device that detects critical environmental sounds (alarms, horns, doorbells) and translates them into real-time haptic feedback.

My Core Contribution

Beyond hardware assembly, I led the UX strategy and developed two distinct "Haptic Language Models" (a Morse-code approach vs. an intuitive sensory approach) to translate auditory cues into physical sensations.

The Outcome

Over 12 weeks, we built a functional, testable physical prototype. Because responses to our initial charity outreach were delayed, this prototype now serves as a "Strategic Provocation" to secure funding and facilitate rich, hands-on co-design sessions with the hearing-impaired community.

Overview & Strategic Problem

Individuals with hearing impairments face daily anxiety about missing critical environmental cues such as fire alarms, approaching vehicles, or doorbells.

A detailed competitor audit revealed a highly fragmented and inaccessible market. Existing solutions force users to choose between high-cost mainstream devices (like the Apple Watch) or proprietary, ecosystem-dependent systems (like Bellman & Symfon) that do not function independently outdoors.

The Goal: Streamline how people interact with machines by creating a standalone, affordable wearable that uses sound recognition as an input and translates it into real-time haptic feedback.

Navigating Research Constraints

A Provocation for Co-Design

Inclusive design requires direct engagement with the community it serves. At the project's onset, I initiated contact with hearing loss charities, including the National Deaf Children's Society (NDCS) and RNID, to ground the project in lived experience.

Due to a lack of timely responses, the project's strategy had to pivot. Rather than halting development, I repositioned Loom as a Strategic Provocation: a functional, testable prototype designed specifically to secure future funding and stakeholder buy-in, and to facilitate rich co-design sessions.

To bridge the immediate gap, I developed an empathy map based on secondary research and observation to guide our initial UX assumptions, mapping out user anxieties regarding safety and the desire for independence without reliance on a smartphone.

Market Gap Analysis

Designing Beyond the Ecosystem

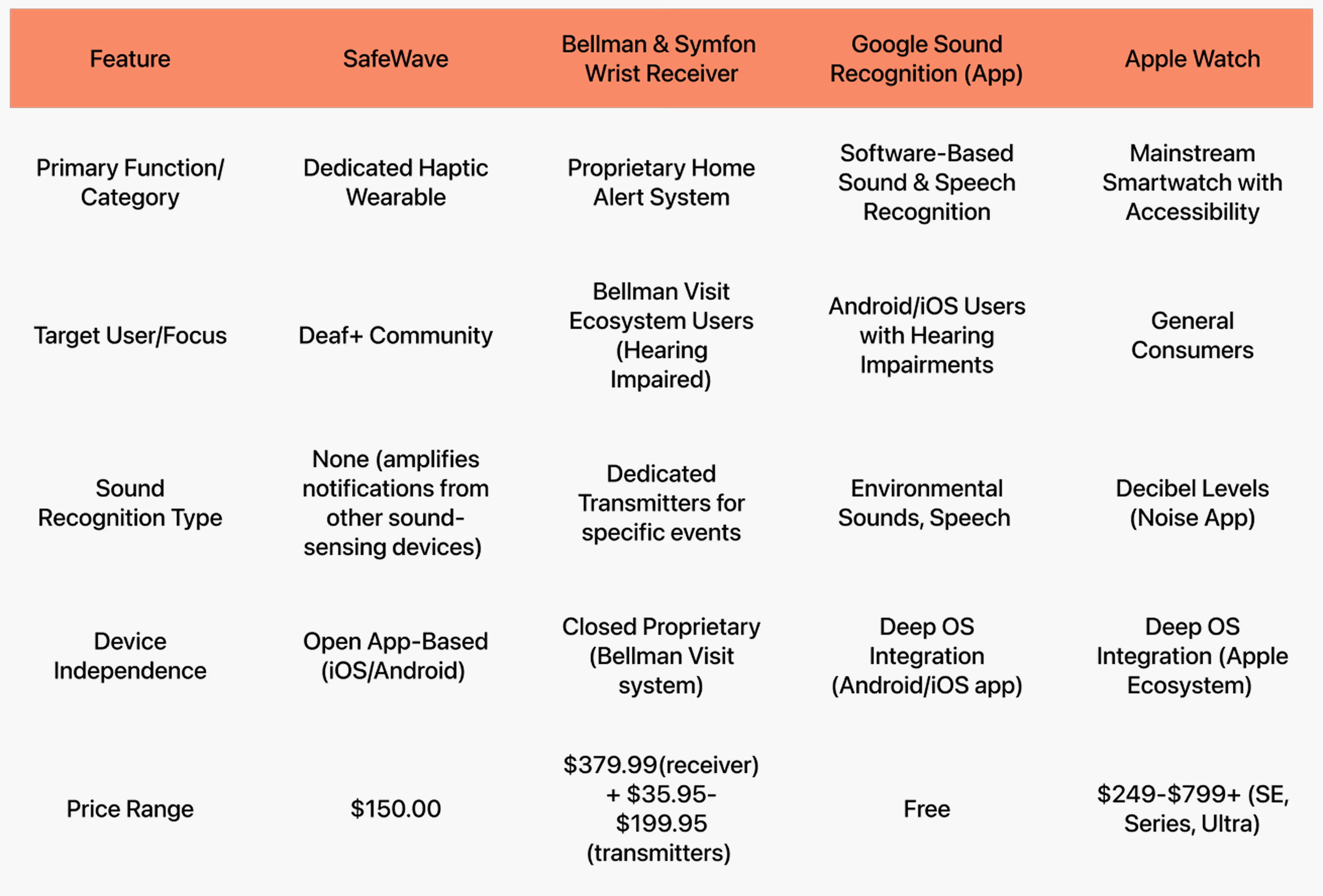

To understand the current landscape of assistive technologies and identify actionable opportunities, I conducted a rigorous audit of both direct and indirect competitors. I analyzed four primary solutions: SafeWave, the Bellman & Symfon Wrist Receiver, Google Sound Recognition, and the Apple Watch.

Rather than simply listing features, I synthesized these market constraints into direct, non-negotiable design requirements for Loom:

The Affordability Barrier: Mainstream smartwatches with accessibility features, like the Apple Watch, are cost-prohibitive ($249–$799+). Even dedicated systems like Bellman & Symfon require expensive base receivers and additional transmitters.

Strategic Requirement: Loom must be built using low-cost, open-source hardware to prioritize true accessibility.

The Danger of Ecosystem Lock-In: Many existing solutions fail users the moment they leave the house. Bellman & Symfon only functions within pre-paired home environments, and Apple/Google solutions rely heavily on constant smartphone connectivity.

Strategic Requirement: Loom must be a fully standalone wearable device. It needs to empower users to navigate dynamic, real-world settings (like public transport or outdoors) without relying on external smart home systems or phone pairing.

The Hardware/Software Disconnect: Software-based AI sound recognition (like Google’s app) is highly capable but lacks a dedicated, hands-free wearable component. Conversely, existing haptic wristbands often lack intelligent, customizable feedback.

Strategic Requirement: Loom must bridge this gap by delivering real-time, customizable haptic feedback directly to the body, keeping the user screen-free and situationally aware.

This audit made our value proposition clear: Loom would not try to compete as a lifestyle smartwatch. Instead, it would serve as a dedicated, affordable, and fully independent assistive tool.

The Core UX Challenge

Designing a Non-Visual Language

The most complex design challenge was not the hardware, but the interaction model. How do you communicate urgency, context, and environment using only a single vibration motor?

I developed two distinct Haptic Language Models to be A/B tested in future user sessions.

Model A: The Morse Code Approach

This model relies on structured pattern recognition. It maps each environmental sound to the Morse code for the first letter of its name.

Fire Alarm: Morse code for "F" (..-.).

Car Honk: Morse code for "C" (-.-.).

Doorbell: Morse code for "D" (-..).

Device On/Welcome: Morse code for "W" (.--).

Hypothesis: While this requires a learning curve for new users, it offers a highly structured and scalable language.
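Model A can be expressed as data: a Morse string per alert, expanded into motor on/off timings. The C++ below is a minimal sketch, not the prototype's firmware. The `Alert` enum, the `Pulse` struct and the function names are hypothetical, and it assumes the conventional Morse ratios (dot = 1 unit, dash = 3 units, gap between symbols in a letter = 1 unit), with the unit length left as a parameter to tune in user sessions.

```cpp
#include <cassert>
#include <cstddef>
#include <string>
#include <vector>

// One step of a haptic pattern: motor on or off for a duration.
struct Pulse {
    bool motorOn;
    int durationMs;
};

// Hypothetical alert types matching the four cues in Model A.
enum class Alert { FireAlarm, CarHonk, Doorbell, Welcome };

// Morse code for the letter assigned to each alert.
std::string morseFor(Alert alert) {
    switch (alert) {
        case Alert::FireAlarm: return "..-.";  // F
        case Alert::CarHonk:   return "-.-.";  // C
        case Alert::Doorbell:  return "-..";   // D
        case Alert::Welcome:   return ".--";   // W
    }
    return "";
}

// Expand a Morse string into on/off pulses using standard timing ratios:
// dot = 1 unit on, dash = 3 units on, 1 unit off between symbols.
std::vector<Pulse> morseToPulses(const std::string& code, int unitMs) {
    assert(unitMs > 0);
    std::vector<Pulse> pulses;
    for (std::size_t i = 0; i < code.size(); ++i) {
        assert(code[i] == '.' || code[i] == '-');  // only dots and dashes
        if (i > 0) {
            pulses.push_back({false, unitMs});  // gap between symbols
        }
        const int units = (code[i] == '-') ? 3 : 1;
        pulses.push_back({true, units * unitMs});
    }
    return pulses;
}
```

On the board, each `Pulse` could be played back by setting the motor pin with `digitalWrite()` and waiting `durationMs` with `delay()`. Keeping the patterns as data rather than hard-coded delays makes it easy to change the unit length between test sessions.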

Model B: Intuitive Sensory Patterns

This model removes the cognitive load of memorization, aiming for an instinctive, biomimetic experience.

Fire Alarm: Intense, rapid, and repetitive vibrations to signal immediate danger.

Car Honk: A long, strong vibration to signal a sudden, large environmental change.

Doorbell: A smooth, rhythmic pulse indicating a non-emergency notification.

Device On/Welcome: A soft, warming vibration, welcoming users into a new way of experiencing auditory cues.

Hypothesis: This system provides a seamless, natural experience straight out of the box, drastically reducing the time needed to interpret the alert.
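Model B has no formal encoding, so any implementation has to commit to concrete numbers. The sketch below expresses each pattern as a list of (intensity, duration) steps. It assumes the coin motor is driven from a PWM-capable pin so that strength can vary (0 to 255, the range `analogWrite()` accepts on an Uno). The `Step` struct, the enum, and every duration and intensity value are hypothetical placeholders for co-design sessions to tune, not settings taken from the prototype.

```cpp
#include <cassert>
#include <vector>

// One step of a haptic pattern. intensity is a PWM duty value (0-255);
// 0 means the motor is off for that step.
struct Step {
    int intensity;
    int durationMs;
};

enum class Alert { FireAlarm, CarHonk, Doorbell, Welcome };

// Hypothetical Model B patterns; all numbers are placeholders.
// The caller is expected to switch the motor off after the last step.
std::vector<Step> intuitivePattern(Alert alert) {
    std::vector<Step> steps;
    switch (alert) {
        case Alert::FireAlarm:
            // Intense, rapid, repetitive: short full-power bursts.
            for (int i = 0; i < 8; ++i) {
                steps.push_back({255, 80});
                steps.push_back({0, 60});
            }
            break;
        case Alert::CarHonk:
            // One long, strong vibration.
            steps.push_back({255, 1200});
            break;
        case Alert::Doorbell:
            // Smooth, rhythmic, medium-strength pulses with even spacing.
            for (int i = 0; i < 3; ++i) {
                steps.push_back({160, 300});
                steps.push_back({0, 400});
            }
            break;
        case Alert::Welcome:
            // Soft "warming" ramp: intensity rises gradually.
            for (int level = 60; level <= 180; level += 40) {
                steps.push_back({level, 200});
            }
            break;
    }
    return steps;
}

// Total length of a pattern in milliseconds.
int totalDurationMs(const std::vector<Step>& steps) {
    int total = 0;
    for (const Step& s : steps) {
        assert(s.intensity >= 0 && s.intensity <= 255);
        total += s.durationMs;
    }
    return total;
}
```

Because each pattern is plain data, one playback loop (for example, `analogWrite()` on the motor pin followed by `delay()` for each step) can serve every alert, and a Model A table could be expressed in the same format for the planned A/B test.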

To complement the haptic system and combat uncertainty, I also mapped out a secondary feedback layer using LED indicators, providing visual confirmation of an alert in case the user removes the device.

The "Testable" Prototype

To prove the core interaction model, we built a working prototype using an Arduino Uno, a microphone sensor, and a coin vibration motor.

Using the ArduinoFFT library, we successfully programmed the device to detect specific audio frequencies and trigger corresponding haptic responses.
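The detection step can be illustrated with a host-side C++ sketch that finds the strongest frequency in a block of microphone samples and maps it to an alert. To stay self-contained and testable off the board, it swaps ArduinoFFT for a naive discrete Fourier transform; on the Uno, the library's windowing, transform and magnitude steps would fill this role far more efficiently. The function names, the magnitude threshold and every frequency band are hypothetical placeholders, not the values used in the Loom prototype.

```cpp
#include <cassert>
#include <cmath>
#include <cstddef>
#include <vector>

// Hypothetical alert classes; None means "no haptic response".
enum class Alert { None, FireAlarm, CarHonk, Doorbell };

// Return the frequency (Hz) of the strongest DFT bin in a block of samples,
// using a naive O(N^2) DFT as a stand-in for ArduinoFFT. The DC bin is
// skipped and only bins below Nyquist are scanned. The peak magnitude is
// written to *peakMagnitude so that quiet input can be rejected.
double dominantFrequency(const std::vector<double>& samples,
                         double sampleRateHz, double* peakMagnitude) {
    const std::size_t n = samples.size();
    assert(n >= 4 && sampleRateHz > 0.0);
    const double pi = std::acos(-1.0);
    std::size_t bestBin = 0;
    double bestMag = 0.0;
    for (std::size_t k = 1; k < n / 2; ++k) {
        double re = 0.0;
        double im = 0.0;
        for (std::size_t t = 0; t < n; ++t) {
            const double angle = 2.0 * pi * k * t / n;
            re += samples[t] * std::cos(angle);
            im -= samples[t] * std::sin(angle);
        }
        const double mag = std::sqrt(re * re + im * im);
        if (mag > bestMag) {
            bestMag = mag;
            bestBin = k;
        }
    }
    if (peakMagnitude != nullptr) {
        *peakMagnitude = bestMag;
    }
    // Bin k corresponds to k * sampleRate / N hertz.
    return bestBin * sampleRateHz / n;
}

// Map a peak frequency to an alert. These bands are illustrative
// placeholders, not measured signatures of real alarms, horns or bells.
Alert classify(double freqHz, double magnitude, double minMagnitude) {
    if (magnitude < minMagnitude) return Alert::None;  // too quiet
    if (freqHz >= 2800.0 && freqHz <= 3500.0) return Alert::FireAlarm;
    if (freqHz >= 300.0 && freqHz <= 600.0) return Alert::CarHonk;
    if (freqHz >= 700.0 && freqHz <= 1200.0) return Alert::Doorbell;
    return Alert::None;
}
```

A single dominant frequency is a deliberately crude signature: a band check like this cannot tell a smoke alarm from another tone in the same range, which is part of the motivation for the AI sound recognition listed under Next Steps.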

UX-Driven Hardware Decisions

We consciously kept the Arduino and breadboard external while housing the vibration motor inside a custom 3D-printed wearable case. This separation allowed us to simulate a realistic, ergonomic form factor on the wrist for accurate user testing, without being limited by the space constraints of our current, non-miniaturized circuitry.

Next Steps

Validation & Future Vision

Loom successfully proves that affordable, independent, hardware-based haptic alerts are feasible. However, a product for the hearing-impaired community cannot be finalized without that community's input.

My immediate next steps include:

Co-Design Partnerships: Re-engaging with the NDCS and RNID to conduct empirical usability testing on the two proposed Haptic Language Models.

AI Integration: Moving from basic frequency detection (FFT) to AI-powered sound recognition models that can identify a wider, more complex range of environmental cues while keeping the device independent.

Metrics for Success: Measuring ease of recognition, memorability, and perceived usefulness in real-world contexts during our user interviews.

Key Learnings & Takeaways

Accessibility is Core, Not an Add-On

This project reinforced my belief that designing for well-being, independence, and dignity is not a secondary feature; it is central to our role as UX designers. Inclusive design has the power to genuinely improve lives, and assistive technology should enable users to feel seen, safe, and supported without feeling stigmatized.

Hardware Constraints Breed Creative UX

Working with physical computing introduced rigorous constraints. Troubleshooting delicate wiring, calibrating microphone sensitivity, and manually grinding 3D-printed cases to fit the vibration motor forced us to adapt quickly and think creatively. These limitations led to our strategic decision to separate the external Arduino "brain" from the wearable 3D-printed case, ensuring we could simulate a realistic ergonomic test without compromising the hardware functionality.

Designing the "Invisible" Experience

Creating a screen-free, haptic-first device completely shifted my perspective on interaction design. Without a visual UI to rely on, every decision had to be anchored by the question: "How would this feel to someone relying on it daily?" This empathy-driven approach was instrumental in identifying market gaps and shaping the development of our haptic language models.

The Value of the "Strategic Provocation"

While not receiving timely responses from hearing loss charities initially felt like a roadblock, it taught me the value of creating an empathy map based on secondary research to keep momentum going. More importantly, it taught me that a functional prototype is an incredible tool for communication. We now have a tangible, working provocation to bring to future co-design sessions, making user testing far more empirical and meaningful.